Process Mining user guide

Folder structure

The information on this page applies only to app templates that have due dates configuration files and a seeds\ folder.

The transformations of a process app consist of a dbt project. The following table describes the contents of a dbt project folder.

| Folder/file | Contains |
|---|---|
| dbt_packages\ | The pm_utils package and its macros. |
| macros\ | An optional folder for custom macros. |
| models\ | .sql files that define the transformations. |
| models\schema\ | .yml files that define tests on the data. |
| seeds\ | .csv files with configuration settings. |
| dbt_project.yml | The settings of the dbt project. |

The Event log and Custom process app templates have a simplified data transformations structure. Process apps created with these app templates do not have this folder structure.

dbt_project.yml

The dbt_project.yml file contains the settings of the dbt project that defines your transformations. The vars section contains variables that are used in the transformations.

Date/time format

Each app template contains variables that determine the format for parsing date/time data. These variables have to be adjusted if the input data has a different date/time format than expected.
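As a sketch of how such a variable reaches a transformation: dbt's var() function reads a value from the vars section of dbt_project.yml when a model is compiled. The variable name datetime_format, the model Events_input and the field Event_end_raw are hypothetical, and try_to_timestamp is Snowflake syntax chosen only for illustration. Check your own dbt_project.yml and database dialect for the actual variable names and parsing functions.

```sql
-- Hypothetical model: variable, model and field names are assumptions,
-- and try_to_timestamp assumes a Snowflake database.
select
    Events_input."Event_ID",
    -- var() is replaced at compile time by the value set in dbt_project.yml,
    -- so adjusting the format there changes every model that uses it.
    try_to_timestamp(
        Events_input."Event_end_raw",
        '{{ var("datetime_format") }}'
    ) as "Event_end"
from {{ ref('Events_input') }} as Events_input
```

If a variable is not defined in dbt_project.yml, dbt raises a compilation error; var() also accepts a default value as a second argument.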

Data transformations

The data transformations are defined in .sql files in the models\ directory. The data transformations are organized in a standard set of subdirectories.

Check out Structure of transformations for more information.

The .sql files are written in Jinja SQL, which allows you to insert Jinja statements inside plain SQL queries. When dbt runs, each .sql file results in a new view or table in the database.
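Whether a model becomes a view or a table is controlled by its materialization. The minimal sketch below, assuming a hypothetical model Cases built from a hypothetical Cases_input model, sets it for a single model with dbt's config() block; app templates may instead set a project-wide default in dbt_project.yml.

```sql
-- Hypothetical model file models\Cases.sql.
-- config() sets the materialization for this model only:
-- 'table' builds a physical table, 'view' builds a view.
{{ config(materialized='table') }}

select
    Cases_input."Case_ID",
    Cases_input."Case_status"
from {{ ref('Cases_input') }} as Cases_input
```

Without a config block or a project-level setting, dbt builds a model as a view.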

Typically, the .sql files have the following structure: Select * from {{ ref('Table_A') }} Table_A.

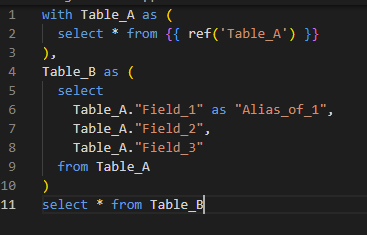

The following code shows an example SQL query.

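```sql
-- Select three fields from the model defined in tableA.sql;
-- ref() resolves to the view or table that dbt built for that model.
select
    tableA."Field_1" as "Alias_1",
    tableA."Field_2",
    tableA."Field_3"
from {{ ref('tableA') }} as tableA
```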


In some cases, for process apps created with earlier versions of the app templates, the .sql files have the following structure:

- With statements: One or more with statements to include the required sub tables.

  - {{ ref('My_table') }} refers to a table defined by another .sql file.

  - {{ source(var("schema_sources"), 'My_table') }} refers to an input table.

- Main query: The query that defines the new table.

- Final query: Typically, a query like Select * from table is used at the end. This makes it easy to make sub-selections while debugging.
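A minimal sketch of this older structure, assuming a hypothetical input table Cases_input and a hypothetical Events model; the field names are illustrative and not taken from an app template.

```sql
-- With statements: include the required sub tables.
with Cases_input as (
    -- Input table, read through source()
    select * from {{ source(var("schema_sources"), 'Cases_input') }}
),

Events as (
    -- Table defined by another .sql file in the models\ directory
    select * from {{ ref('Events') }}
),

-- Main query: defines the new table (one row per case with its event count).
Cases as (
    select
        Cases_input."Case_ID",
        count(Events."Event_ID") as "Number_of_events"
    from Cases_input
    left join Events
        on Cases_input."Case_ID" = Events."Case_ID"
    group by Cases_input."Case_ID"
)

-- Final query: replace Cases with another CTE name to inspect it while debugging.
select * from Cases
```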

For more tips on how to write transformations effectively, refer to Tips for writing SQL.

Adding source tables

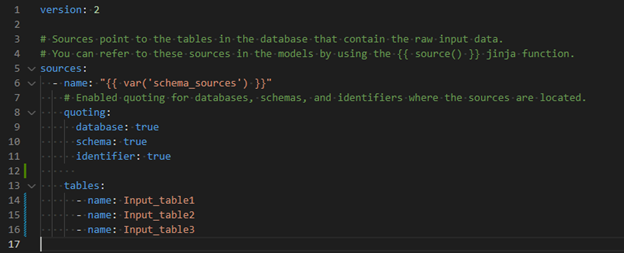

When you upload a new input file, a new source table is automatically added in the models\schema\sources.yml in the dbt project. This way, other models can refer to it by using {{ source(var("schema_sources"), 'My_table') }}. Check outManaging input data for more information on how to configure input tables.

The following illustration shows an example.

For more detailed information, refer to the official dbt documentation on Sources.
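A minimal sketch, assuming an uploaded input file named My_table with hypothetical fields Field_1 and Field_2. The model reads the new source table through source() rather than a hard-coded table name, so dbt resolves the schema from the schema_sources variable and records the dependency in its lineage.

```sql
-- Hypothetical model reading the uploaded input table My_table.
-- 'My_table' must match the table name listed in models\schema\sources.yml.
select
    My_table."Field_1",
    My_table."Field_2"
from {{ source(var("schema_sources"), 'My_table') }} as My_table
```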

Data output

The data transformations must output the data model that is required by the corresponding app; each expected table and field must be present.

If you want to add new fields to your process app, you can add these fields in the transformations.
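For example, assuming the input data contains a hypothetical field Case_priority, a new field can be added by selecting it in the relevant model. The model and field names are illustrative; the fields that the app requires must remain in the output.

```sql
-- Hypothetical Cases model extended with one new field.
select
    -- Existing fields expected by the app
    Cases_input."Case_ID",
    Cases_input."Case_status",
    -- New field, passed through from the input data
    Cases_input."Case_priority"
from {{ ref('Cases_input') }} as Cases_input
```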

Macros

Macros make it easy to reuse common SQL constructions. For detailed information, refer to the official dbt documentation on Jinja macros.
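A minimal sketch of a custom macro in the macros\ folder and a model that calls it. The macro name duration_in_days, the model Cases and its fields are hypothetical. datediff(day, ...) is valid in Snowflake and SQL Server, but check the dialect your process app uses.

```sql
-- File: macros\duration_in_days.sql (hypothetical custom macro)
-- Returns the number of day boundaries between two date/time fields.
{% macro duration_in_days(start_field, end_field) %}
    datediff(day, {{ start_field }}, {{ end_field }})
{% endmacro %}

-- File: models\Cases_with_duration.sql (hypothetical model calling the macro)
select
    Cases."Case_ID",
    {{ duration_in_days('Cases."Case_start"', 'Cases."Case_end"') }} as "Case_duration_days"
from {{ ref('Cases') }} as Cases
```

Because the macro is expanded at compile time, changing the expression in one place updates every model that calls it.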

pm_utils

The pm-utils package contains a set of macros that are typically used in Process Mining transformations. For more info about the pm_utils macros, check out ProcessMining-pm-utils.

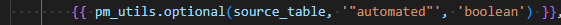

The following illustration shows an example of Jinja code calling the pm_utils.optional() macro.
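The sketch below approximates such a call, based on our reading of the pm-utils README: optional() takes a table (here passed through source()) and a quoted column name, and falls back to a column of null values when that column is missing from the input. Treat the signature as an assumption and verify it in ProcessMining-pm-utils; Cases_input and Case_type are hypothetical names.

```sql
-- Sketch only: verify the pm_utils.optional() signature in ProcessMining-pm-utils.
select
    -- Field that is always present in the input
    Cases_input."Case_ID",
    -- Field that may be missing: selected if present, otherwise null values
    {{ pm_utils.optional(source(var("schema_sources"), 'Cases_input'), '"Case_type"') }} as "Case_type"
from {{ source(var("schema_sources"), 'Cases_input') }} as Cases_input
```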

Seeds

Seeds are .csv files that are used to add data tables to your transformations. For detailed information, refer to the official dbt documentation on seeds.

In Process Mining, seeds are typically used to make it easy to configure mappings in your transformations.

After editing a seed file, select Run file or Run all to update the corresponding data table.

Check out Activity Configuration: Defining activity order and Simulating automation potential for examples of using seed files.
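A minimal sketch of how a model can use a seed, assuming a hypothetical seed file seeds\Activity_order.csv and a hypothetical Events model. dbt loads the seed as a table that models reference with ref(), just like another model. The quoted column names assume that the seed columns keep the casing of the CSV header, which depends on the project's quote_columns seed setting.

```sql
-- Hypothetical seed seeds\Activity_order.csv:
--   Activity,Activity_order
--   Create order,1
--   Approve order,2
-- Map each event's activity to its configured order.
select
    Events."Event_ID",
    Events."Activity",
    Activity_order."Activity_order"
from {{ ref('Events') }} as Events
left join {{ ref('Activity_order') }} as Activity_order
    on Events."Activity" = Activity_order."Activity"
```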

Tests

The models\schema\ folder contains a set of .yml files that define tests. These validate the structure and contents of the expected data. For detailed information, refer to the official dbt documentation on tests.
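To illustrate what such a test validates: dbt compiles each test into a query that returns failing rows, and the test passes when the query returns none. The queries below approximate the built-in not_null and unique tests for a hypothetical Case_ID field on a hypothetical Cases model; the SQL that dbt actually generates differs in detail.

```sql
-- Approximation of a not_null test: any returned row is a failure.
select "Case_ID"
from {{ ref('Cases') }}
where "Case_ID" is null;

-- Approximation of a unique test: any value that occurs more than once fails.
select "Case_ID", count(*) as n_records
from {{ ref('Cases') }}
where "Case_ID" is not null
group by "Case_ID"
having count(*) > 1;
```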

dbt projects

Data transformations are used to transform input data into data suitable for Process Mining. The transformations in Process Mining are written as dbt projects.

This page gives an introduction to dbt. For more detailed information, refer to the official dbt documentation.

pm-utils package

Process Mining app templates come with a dbt package called pm_utils. This pm-utils package contains utility functions and macros for Process Mining dbt projects. For more information about pm_utils, refer to ProcessMining-pm-utils.

Updating the pm-utils version used for your app template

UiPath® constantly improves the pm-utils package by adding new functions.

When a new version of the pm-utils package is released, we recommend updating the version used in your transformations so that you can use the latest functions and macros of the pm-utils package.

You can find the version number of the latest pm-utils release in the Releases panel of the ProcessMining-pm-utils repository.

Follow these steps to update the pm-utils version in your transformations.

- Download the source code (zip) of the pm-utils release.

- Extract the zip file and rename the folder to pm_utils.

- Export transformations from the inline Data transformations editor and extract the files.

- Replace the pm_utils folder from the exported transformations with the new pm_utils folder.

- Zip the contents of the transformations again and import them in the Data transformations editor.