Document Understanding classic user guide

Training extractors and classifiers is now more convenient in the Document Understanding™ product than in the AI Center service, thanks to the One Click Extraction and One Click Classification features.

Minimal dataset size

To run a Training pipeline successfully, we strongly recommend a minimum of 10 documents and at least 5 samples of each labeled field in your dataset. Otherwise, the pipeline throws the following error: Dataset Creation Failed.

Training on GPU vs CPU

For larger datasets, you need to train using GPU. Moreover, training on a GPU is at least 10 times faster than training on a CPU. For the maximum dataset size depending on the version and infrastructure, check the table below.

Table 1. Maximum dataset for each version

| Infrastructure | <2021.10.x | 2021.10.x | >2021.10.x |
|---|---|---|---|
| CPU | 500 pages | 5,000 pages | 1,000 pages |
| GPU | 18,000 pages | 18,000 pages | 18,000 pages |

If you are encountering failed pipelines when training large datasets, we recommend upgrading to ML packages version 24.4 or newer. The most recent versions provide stability enhancements, which could significantly reduce these issues. For more information on dataset structure, check the Dataset format section.
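The recommendations above can be turned into a quick pre-flight check before you start a pipeline. The following is a minimal sketch, not part of the product: the function name `check_dataset`, its arguments, and the version band labels used as dictionary keys are hypothetical, and the limits are simply copied from Table 1 and the minimal dataset size recommendation.

```python
# Page limits copied from Table 1. The dictionary keys are hypothetical labels
# for the infrastructure and version bands, chosen for this sketch only.
MAX_PAGES = {
    "cpu": {"<2021.10.x": 500, "2021.10.x": 5000, ">2021.10.x": 1000},
    "gpu": {"<2021.10.x": 18000, "2021.10.x": 18000, ">2021.10.x": 18000},
}

# Recommended minimums from the "Minimal dataset size" paragraph.
MIN_DOCUMENTS = 10
MIN_SAMPLES_PER_FIELD = 5


def check_dataset(num_documents, samples_per_field, num_pages,
                  infrastructure, version_band):
    """Return a list of problems found; an empty list means none were found.

    samples_per_field maps each labeled field name to its number of samples.
    """
    problems = []

    if num_documents < MIN_DOCUMENTS:
        problems.append(
            f"only {num_documents} documents, recommended minimum is {MIN_DOCUMENTS}"
        )

    for field, count in sorted(samples_per_field.items()):
        if count < MIN_SAMPLES_PER_FIELD:
            problems.append(
                f"field '{field}' has {count} samples, "
                f"recommended minimum is {MIN_SAMPLES_PER_FIELD}"
            )

    limit = MAX_PAGES[infrastructure][version_band]
    if num_pages > limit:
        problems.append(
            f"{num_pages} pages exceeds the {limit}-page limit "
            f"for {infrastructure.upper()} on {version_band}"
        )

    return problems
```

For example, calling `check_dataset(8, {"total": 3}, 1500, "cpu", ">2021.10.x")` reports three problems: too few documents, too few samples for the `total` field, and a page count above the CPU limit. The same page count passes on GPU.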

There are two ways to train an ML model:

- training a model from scratch

- retraining an out-of-the-box model

Training a model from scratch can be done using the DocumentUnderstanding ML package, which trains the model using only the dataset provided as input.

Retraining can be done using out-of-the-box ML packages such as Invoices, Receipts, Purchase Orders, Utility Bills, Invoices India, Invoices Australia, and so on; that is, any data extraction ML package except DocumentUnderstanding. Training using one of these packages has one additional input: a base model. We refer to this as retraining because you are not starting from scratch but from a base model. This approach uses a technique called Transfer Learning, where the model takes advantage of the information encoded in another, preexisting model. The model retains some of the out-of-the-box knowledge, but it learns from the new data too. However, as your training dataset size increases, the pretrained base model matters less and less. It is mainly relevant for small to medium size training datasets (up to 500-800 pages).

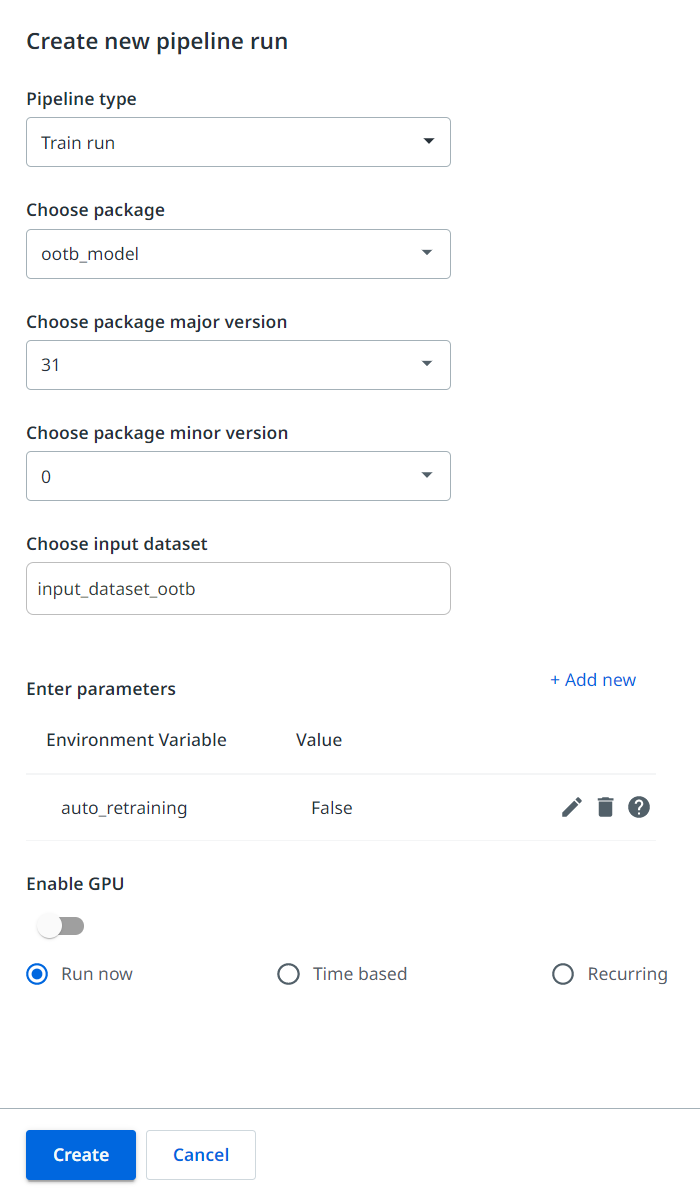

Configure the training pipeline as follows:

- In the Pipeline type field, select Train run.

- In the Choose package field, select the package you created based on the DocumentUnderstanding ML Package.

- In the Choose package major version field, select a major version for your package.

- In the Choose package minor version field, select a minor version for your package. Check the Choosing the minor version section below for more information.

- In the Choose input dataset field, select a dataset. For building high quality training datasets, you can check this tutorial.

- In the Enter parameters section, enter any environment variables that are defined and used by your pipeline. For most use cases, no parameter needs to be specified; the model uses advanced techniques to find a performant configuration. However, here are some environment variables you could use:

  - auto_retraining, which allows you to complete the Auto-retraining Loop. If the variable is set to True, the input dataset needs to be the export folder associated with the labeling session where the data is tagged. If the variable remains set to False, the input dataset needs to correspond to the dataset format.

  - model.epochs (optional), which customizes the number of epochs for the Training Pipeline (the default value is 100).

    Note: For larger datasets, containing more than 5000 pages, you can initially perform a full pipeline run with the default number of epochs. This allows you to evaluate the model's accuracy. After that, you can decrease the number of epochs to about 30-40 and compare the accuracy of the two runs to determine whether the reduced number of epochs yields comparable precision. For smaller datasets, in particular those with fewer than 5000 pages, you can keep the default number of epochs.

  - For ML Packages v23.4 or higher, training on datasets smaller than 400 pages uses an approach called Frozen Backbone to accelerate the training and improve performance. However, you have the option to override this behavior and force Full Training even for smaller datasets, or conversely, to force Frozen Backbone training even for larger datasets (up to a maximum of 3000 pages). To do so, use the following optional environment variables. They must be used in pairs: combine the first variable with either the second or the third.

    - model.override_finetune_freeze_backbone_mode=True: include this environment variable to override the default behavior. It is required in both of the situations below.

    - model.finetune_freeze_backbone_mode=True: include this environment variable to force the model to use Frozen Backbone even for larger datasets.

    - model.finetune_freeze_backbone_mode=False: include this environment variable to force the model to use Full Training even for smaller datasets.

- Select whether to train the pipeline on GPU or on CPU. The Enable GPU slider is disabled by default, in which case the pipeline is trained on CPU.

- Select when the pipeline should run: Run now, Time based, or Recurring. If you are using the auto_retraining variable, select Recurring.

- After you configure all the fields, click Create. The pipeline is created.
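The rules for the optional environment variables can be easy to get wrong, especially the requirement to pair the override variable with the mode variable. The sketch below collects them in one place. It is only an illustration under stated assumptions: the helper `build_training_parameters` and its arguments are hypothetical, and passing the values as the strings "True" and "False" is an assumption based on how the variables are written above. Only the variable names, the default of 100 epochs, and the 3000-page maximum come from this page.

```python
# Maximum dataset size for which Frozen Backbone can be forced (from this page).
MAX_PAGES_FORCED_FROZEN_BACKBONE = 3000


def build_training_parameters(num_pages, auto_retraining=False, epochs=None,
                              force_backbone_mode=None):
    """Build the environment variables to type into the Enter parameters section.

    force_backbone_mode: None keeps the default behavior, "frozen" forces
    Frozen Backbone, "full" forces Full Training. (Hypothetical argument.)
    """
    params = {}

    # auto_retraining defaults to False, so it is only emitted when enabled.
    if auto_retraining:
        params["auto_retraining"] = "True"

    # model.epochs is optional; leaving it out keeps the default of 100.
    if epochs is not None:
        if epochs <= 0:
            raise ValueError("epochs must be a positive integer")
        params["model.epochs"] = str(epochs)

    if force_backbone_mode is not None:
        if force_backbone_mode not in ("frozen", "full"):
            raise ValueError("force_backbone_mode must be 'frozen' or 'full'")
        if (force_backbone_mode == "frozen"
                and num_pages > MAX_PAGES_FORCED_FROZEN_BACKBONE):
            raise ValueError(
                "Frozen Backbone can only be forced for datasets of up to "
                f"{MAX_PAGES_FORCED_FROZEN_BACKBONE} pages"
            )
        # The override variable is required whenever the mode is forced,
        # so the two variables are always emitted as a pair.
        params["model.override_finetune_freeze_backbone_mode"] = "True"
        params["model.finetune_freeze_backbone_mode"] = (
            "True" if force_backbone_mode == "frozen" else "False"
        )

    return params
```

For instance, `build_training_parameters(6000, epochs=35)` produces only the `model.epochs` entry for a second, shorter run on a large dataset, while `build_training_parameters(2500, force_backbone_mode="frozen")` produces both backbone variables together.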


Choosing the minor version

In most situations, minor version 0 should be chosen. This is because the larger and more diverse your training dataset, the better your model's performance. This principle aligns with the current state-of-the-art ML technology's goal of using large, high-quality, and representative training sets. Therefore, as you accumulate more training data for a model, you should add the data to the same dataset to further enhance the model's performance.

There are situations, however, where training on a minor version other than 0 makes sense. This is typically the case when a partner needs to service multiple customers in the same industry, but UiPath® doesn't have a pre-trained model optimized for that industry, geography, or document type.

In such a case, the partner might develop a pre-trained model using a variety of document samples from that industry (drawn from many sources rather than a single one, for better generalization). This model, trained on version 0 of the ML package, would then serve as the base model for training customer-specific models. Following versions, such as version 1, would be used either to refine the pre-trained model or to create customer-specific models.

However, to obtain good results, the pre-trained model should be unbiased and based on a highly diverse training set. If the base model is optimized for a specific customer, it may not perform well for other customers. In such a case, using minor version 0 as the base model yields better results.